You have a large ecommerce website.You want to make small incremental improvements to the performance of the website. You can measure the impact via an increase in profits. Everything sounds pretty simple. Just run small experiments on everything from the user experience, pricing, pay-per-click ads etc. when you see something working do more of it. If things aren’t working then try something else.

This is age-old marketing know-how. I’ve seen this approach being used in direct-marketing since the start of my career. This is the beauty of digital. We can measure everything. Not like stodgy old media. But are these assumptions true?

Lets consider a simple model. The experiment could be anything from a new online ad campaign, an A/B test around button positioning or a good old fashioned bit of discounting. For the purpose of this discussion it doesn’t matter. We have a large customer base. We measure success based on a influencing the customers behaviour. We can expect a very low conversion rate. We also have a low cost total cost for the experiment.

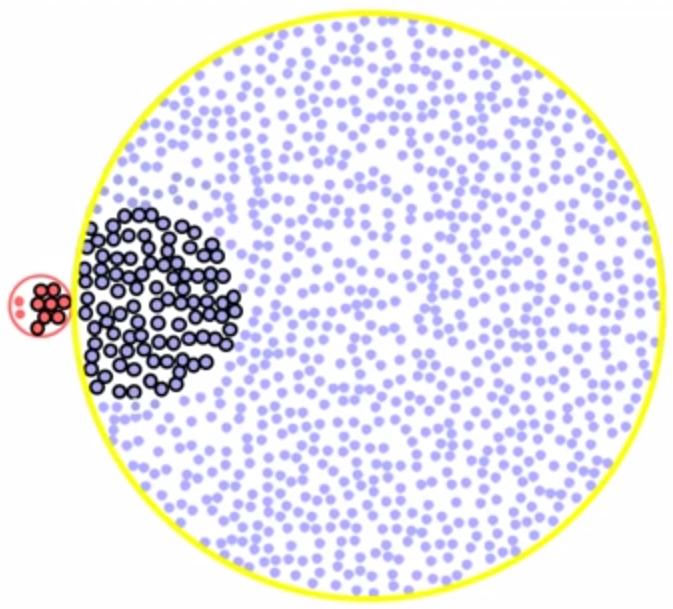

We begin with a big cohort of customers. We then split these out into those who we were able to positively influence, and those who we didn’t and had a negative effect on. The second group were never going to buy, or were going to buy anyway. In each group we then consider the accuracy of our measurements, are the results we measure true or false.

This gets confusing really quick. So please stay with me.

When calculating our ROI the measurement we need to get a count of all the positives. This count is made up of two types. The true positives (i.e. people we correctly measure as being influenced by our actions) and false positives (i.e. people who weren’t influenced but because of inaccuracy in the measurement methods we think we’re).

Let’s assume we have a cohort of 100,000 customers. We have a 1% error rate in measuring false positives (people who weren’t actually influenced). Let’s also assume the true influence rate is 5%.

- True Positives = 100,000 x 5% x 99% = 4950

- False Negatives = 100,000 x 5% x 1% = 50

- True Negatives = 100,000 x 95% x 99% = 94050

- False Positives = 100,000 x 95% x 1% = 950

So our test results give the following.

- Positives = 5,900 = 18% error

- Negatives = 94100 = 1% error (as expected)

This is pretty worrying. We could easily be making a decision based on an ROI of 20%, while actually with a small error rate of just 1% that results are break-even.

Let’s consider some real world examples and some possible strategies for avoiding this effect (known as the False-Positive Paradox).

The first is a pay-per-click campaign. So here we just pay for clicks. Tracking purchases is pretty straight-forward most analysis tools give you revenue figures. However it is going to be pretty hard to measure definite cause and effect here unless we adopt a more scientific approach. Ideally we would have a pre-defined cohort of users to whom we show the advert, then we can measure real influence by comparing users who find the site organically vs. those clicking on the ad. Given most reporting tools don’t do this I’d argue the error rate here is much higher than our illustration of 1%. Ideally use cohorts, if not ensure you’re ROI barrier is raised high enough to lift you out of danger.

Next let us consider A/B testing of a new design. In this case we are running an experiment using a typical javascript based tool. We are looking for justification for doing some change to the platform. So it is the cost of the proposed changes to the platform we need to consider. Now in this example we can expect a little more scientific approach from the start. We are running the test in parallel which will remove a lot of noise from the results (for example a sale starting during the test period will have the same effect on both groups). However unlike the campaign example here are measurement is not based on absolute sales. We’re looking at a shift in buying patterns. The customers who didn’t buy something with the old design (A) who will now buy something with the new design (B). So unless you have a landslide victory with the new design take care.

The final example is implementing personalisation logic. In this case we are segmenting our customer base and to a certain group showing some different content. Again if carried out using A/B logic the results are more scientific. However in analysis of these kind of rules generally will only show the sales figures of the segmented group of users and any uplift seen in this group against the norm. If the rate of ‘influence’ is low then we can expect errors. To avoid this case personalisation rules should again lead to much higher influence rates. In a word keep it simple, creating multiple highly targeted rules based on non-cohort based analysis may be unwise.

References